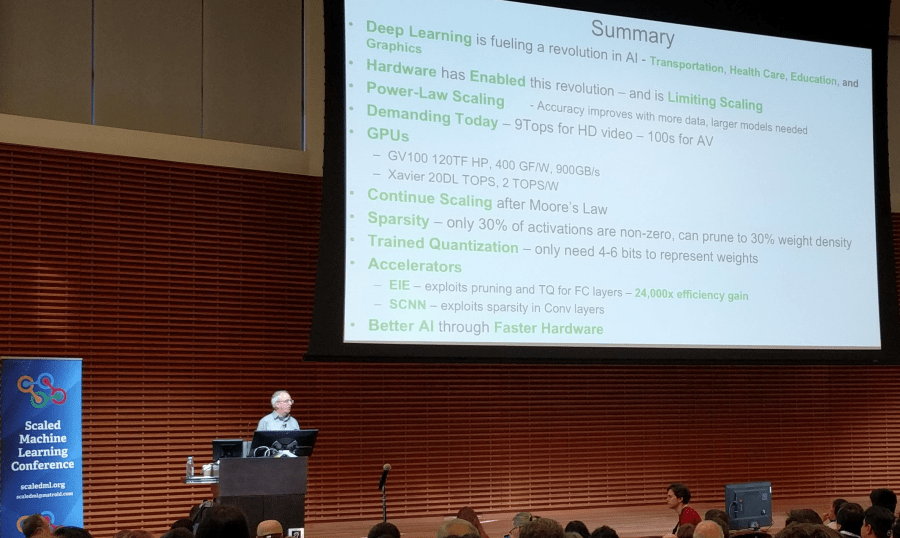

Bill Dally, Nvidia

Matroid and the Stanford Center for Image Systems Engineering ran the third annual ScaledML conference yesterday, 24 March 2018. It was a concentrated survey of work in progress in machine learning, with admirably little overt advertising. The overall impression is of enormous real potential, quite separate from the enormous hype and inflated expectations; of significant uncertainty about what will actually get done; and of a lot of work in progress on the necessary infrastructure in hardware, architecture, languages, systems, and education.

Agenda and speaker list

08:45 – 09:00 Introduction Reza Zadeh Matroid

09:00 – 10:00 Ion Stoica Databricks

10:00 – 11:00 Reza Zadeh Matroid

11:00 – 11:30 Andrej Karpathy Tesla

11:30 – 12:00 Jennifer Chayes Microsoft Research

13:00 – 14:00 Jeff Dean Google

14:00 – 14:30 Anima Anandkumar Amazon

14:30 – 15:00 Ilya Sutskever OpenAI

15:00 – 15:30 Francois Chollet Google

16:00 – 17:00 Bill Dally Nvidia

17:00 – 17:30 Simon Knowles Graphcore

17:30 – 18:00 Yangqing Jia Facebook

From my notes:

The successor to the AMP Lab at Berkeley is the RISE Lab, which builds Real-time Intelligent Secure Explainable applications to make low-latency decisions on live data with strong security (Ion Stoica). Note the word Explainable; explainability came up as a common theme.

Being able to examine detector errors and mistakes came up again in Reza Zadeh’s Matroid demonstration – this was the only live product shown. A user can build a detector with multiple attributes to pick out images from streaming video.

Bill Dally (Chief Scientist, Nvidia) reckons that Moore’s Law is dead; Simon Knowles (Graphcore) gave a more reasoned explanation about possible performance gains from hardware improvements over the next 10 years.

References

Agenda http://scaledml.org/

RISE Lab https://rise.cs.berkeley.edu/

Graphcore hardware, use of BSP – Simon Knowles https://supercomputersfordl2017.github.io/Presentations/SimonKnowlesGraphCore.pdf

Jeff Dean’s slides https://www.matroid.com/scaledml/2018/jeff.pdf

Bill Dally’s slides https://www.matroid.com/scaledml/2018/bill.pdf

Anima Anandkumar https://www.matroid.com/scaledml/2018/anima.pdf

Ion Stoica https://www.matroid.com/scaledml/2018/ion.pdf

Francois Chollet on Keras https://www.matroid.com/scaledml/2018/francois.pdf

Ilya Sutskever https://www.matroid.com/scaledml/2018/ilya.pdf

Jennifer Chayes https://www.matroid.com/scaledml/2018/jennifer.pdf

Yangqing Jia https://www.matroid.com/scaledml/2018/yangqing.pdf